Hi Friends,

I would like to share a very good article on “Non-Clustered Column Store Index in SQL Server 2012” by my friend Srikanth Manda. I hope you enjoy it.

Everyone agrees that hardware speed and capacity have increased over the past two or three decades, but disk I/O (input/output), meaning the rate at which data can be transferred to and from disk, has not grown at the same pace and is still slow. At the same time, databases keep getting larger: the data we have now could grow almost tenfold in the next two to three years, so SQL Server has to provide a technology that can address this kind of data growth in data warehouses. Second, as the data grows, query performance becomes critical. Customers want interactive response times: they want to keep large amounts of data, process it, and get query results quickly. Third, data warehousing is becoming a commodity, and the goal is to bring data warehouse technology to the masses. Finally, the amount of data in a data warehouse (DWH) grows tremendously day by day, so retrieving (querying) data from it takes a long time, which degrades the performance of the data warehouse. The Non-Clustered Column Store Index is designed to address all these issues.

In this article, we will learn about this new feature: how to build it, how it is implemented in SQL Server, how exactly the data is stored, what happens inside the engine, and how it improves the performance of data warehousing queries.

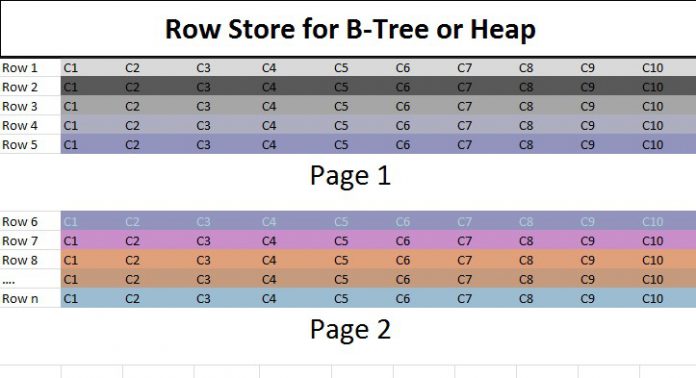

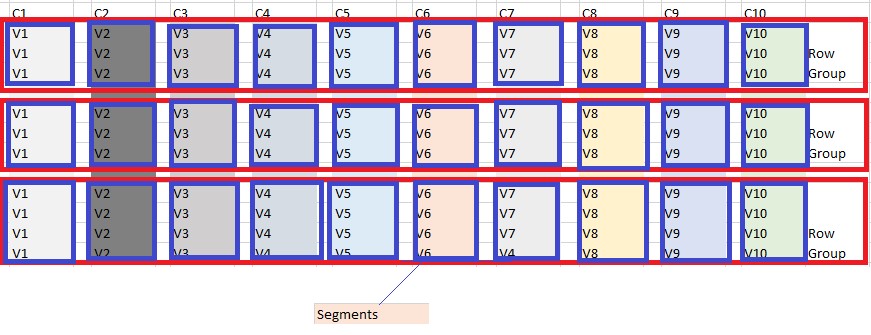

In a traditional relational DBMS, data is stored as rows (for example, in B-tree structures). Microsoft SQL Server, for instance, stores rows in 8 KB pages. If a table has 10 columns, Row 1, Row 2, and so on are stored one after another, and when the 8 KB page becomes full, the next row goes to a second page, and so on. This row-oriented format has worked very well for OLTP workloads. In the image below, the values of all ten columns of each row are stored together, contiguously, on the same page; once the page is full, the next row goes to the second page.

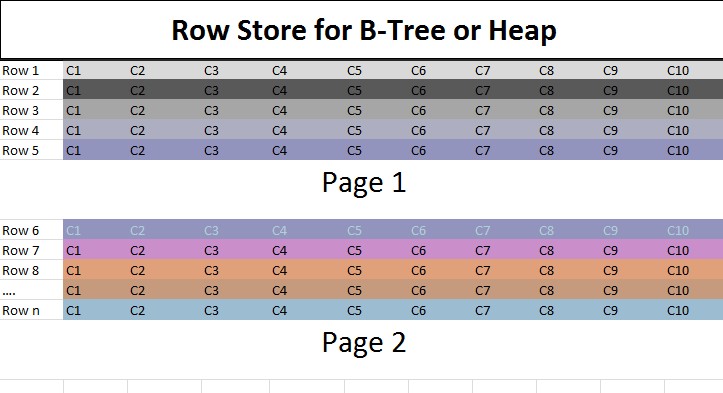
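The row-store layout described above can be sketched in a few lines. This is only an illustration under simplifying assumptions: every row has the same hypothetical size of 100 bytes, and the page header and per-row overhead that SQL Server actually uses are ignored, so the exact rows-per-page figure is not what the engine would produce.

```python
# Sketch of a row store: complete rows packed one after another into 8 KB pages.
# Simplifying assumptions (not SQL Server's exact rules): every row has the same
# size, and page headers and per-row overhead are ignored.
PAGE_SIZE_BYTES = 8 * 1024  # 8 KB page


def pack_rows_into_pages(rows, row_size_bytes):
    """Return a list of pages; each page holds whole rows, in insertion order."""
    rows_per_page = PAGE_SIZE_BYTES // row_size_bytes
    if rows_per_page == 0:
        raise ValueError("a row must fit on a single page")
    pages = []
    for start in range(0, len(rows), rows_per_page):
        # All ten column values of a row stay together on the same page;
        # when a page is full, the next row starts a new page.
        pages.append(rows[start:start + rows_per_page])
    return pages


# Hypothetical table: 200 rows with ten columns C1..C10, about 100 bytes per row.
table = [tuple(f"r{r}c{c}" for c in range(1, 11)) for r in range(1, 201)]
pages = pack_rows_into_pages(table, row_size_bytes=100)
print([len(page) for page in pages])  # [81, 81, 38]
```

Note that reading any single column from this layout still means touching every page, because each page interleaves all ten columns.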

What has changed is that, instead of storing data in row format, we can store it in column store format. For example, for a table with columns C1 to C10, instead of storing the data as rows, we store it as columns: all values of C1 together, all values of C2 together, and so on up to C10. Storing data in column store format gives very good compression, because data from the same column has the same type and domain. For example, consider a company that operates globally. All employees in India have India as their Country, and similarly, employees in the US have ‘US’ as their Country. The Country column therefore compresses well, because it contains a repetitive pattern. This kind of opportunity is much more available in the column store format than in the row store format.
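To make the compression argument concrete, here is a minimal sketch of two generic techniques, dictionary encoding followed by run-length encoding, applied to a hypothetical Country column. It is an illustration of why a single column compresses well, not SQL Server's actual on-disk encoding.

```python
# Sketch: why one column compresses well. Values in a column share a type and
# domain (and often repeat), so a dictionary plus run-length encoding shrinks
# them. This is a generic illustration, not SQL Server's on-disk format.
from itertools import groupby


def dictionary_encode(values):
    """Replace each distinct value with a small integer id."""
    dictionary, ids = {}, []
    for value in values:
        if value not in dictionary:
            dictionary[value] = len(dictionary)
        ids.append(dictionary[value])
    return dictionary, ids


def run_length_encode(ids):
    """Collapse consecutive repeats into (id, run_length) pairs."""
    return [(value_id, len(list(run))) for value_id, run in groupby(ids)]


def decode(dictionary, runs):
    """Rebuild the original column from the dictionary and the runs."""
    reverse = {value_id: value for value, value_id in dictionary.items()}
    return [reverse[value_id] for value_id, length in runs for _ in range(length)]


# Hypothetical Country column for ten employees.
country = ["India"] * 6 + ["US"] * 4
dictionary, ids = dictionary_encode(country)
runs = run_length_encode(ids)
print(dictionary)  # {'India': 0, 'US': 1}
print(runs)        # [(0, 6), (1, 4)]
assert decode(dictionary, runs) == country
```

Ten strings become two dictionary entries and two runs. In a row store, the same Country values would be separated by the other columns of each row, so the repeats would not sit next to each other.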

In the row store format, the data for all ten columns C1, C2, C3, …, C10 is stored together. Suppose we want to retrieve only columns C1, C3, and C5. In the row store format, we need to read the entire row of 10 columns and then extract the specified columns. In the column store format, we can fetch only the required columns, i.e., C1, C3, and C5. This reduces I/O and makes it more likely that the data fits in memory, which gives much better performance. Column store technology also improves how the query is processed, which results in much better response times.
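The I/O difference for a query on C1, C3, and C5 can be sketched by counting values read. The data is hypothetical and the sketch assumes every value costs the same to read; real I/O happens in pages or segments, so only the proportion (3 of 10 columns) carries over.

```python
# Sketch: how many values are read when a query needs only C1, C3 and C5.
# Simplifying assumption: every value costs the same to read (real I/O is done
# in pages or segments, not individual values).
NUM_ROWS = 1000
columns = [f"C{i}" for i in range(1, 11)]  # C1..C10
wanted = ["C1", "C3", "C5"]

# Hypothetical data; each value records its row number and column name.
row_store = [{c: (r, c) for c in columns} for r in range(NUM_ROWS)]  # list of rows
column_store = {c: [row[c] for row in row_store] for c in columns}   # one list per column


def read_from_row_store(rows, needed):
    values_read, result = 0, []
    for row in rows:
        values_read += len(row)  # the whole 10-column row is fetched
        result.append(tuple(row[c] for c in needed))
    return result, values_read


def read_from_column_store(store, needed):
    values_read = sum(len(store[c]) for c in needed)  # only the needed columns
    return list(zip(*(store[c] for c in needed))), values_read


row_result, row_reads = read_from_row_store(row_store, wanted)
col_result, col_reads = read_from_column_store(column_store, wanted)
assert row_result == col_result  # same answer either way
print(row_reads, col_reads)  # 10000 3000
```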

When we create a Non-Clustered Column Store Index, the data in the index is stored in column format.

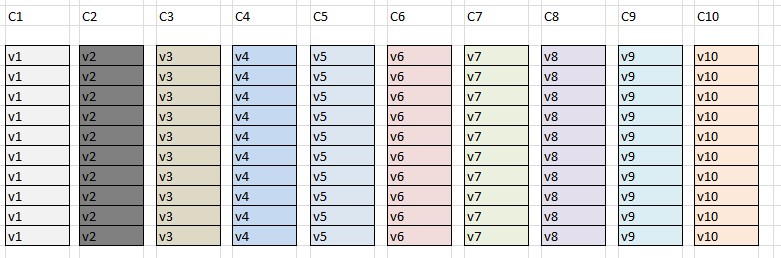

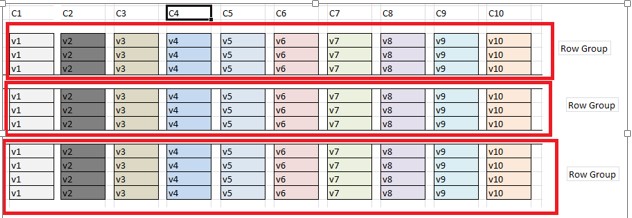

Suppose we store 10 million rows in column format. We do not store all 10 million values of column C1 as a single storage unit; instead, we break the rows into smaller chunks, which we call row groups.

Here the rows are grouped into chunks of about 1 million rows, each called a row group. Each row group has 10 columns here, and each column is stored in its own segment, so each row group has 10 segments. The benefit of storing each column in its own segment is that when I want the values of columns C1 and C2, I fetch only the segment for column C1 and the segment for column C2.

Note: The blue boxes in the image are the segments.
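The row group and segment layout can be sketched as follows. `ROW_GROUP_SIZE` follows the article's "about 1 million rows"; the demo data and its tiny row group size of 3 are hypothetical, chosen only so the result is easy to inspect. The helper names are illustrative, not SQL Server internals.

```python
# Sketch: splitting a column-oriented table into row groups and column segments.
# ROW_GROUP_SIZE follows the article's "typically 1 million" rows; the demo
# below uses a tiny hypothetical size so the output stays readable.
import math

ROW_GROUP_SIZE = 1_000_000


def count_segments(num_rows, num_columns, row_group_size=ROW_GROUP_SIZE):
    """Each row group holds one segment per column."""
    row_groups = math.ceil(num_rows / row_group_size)
    return row_groups, row_groups * num_columns


def build_segments(columns, row_group_size):
    """columns: {name: [values]} -> {(row_group_id, name): [values]}"""
    num_rows = len(next(iter(columns.values())))
    segments = {}
    for rg, start in enumerate(range(0, num_rows, row_group_size)):
        for name, values in columns.items():
            segments[(rg, name)] = values[start:start + row_group_size]
    return segments


print(count_segments(10_000_000, 10))  # (10, 100)

demo = {"C1": list(range(7)), "C2": list("abcdefg")}
segs = build_segments(demo, row_group_size=3)
# To answer a query on C1 only, fetch just the C1 segments.
c1 = [v for (rg, name), seg in sorted(segs.items()) if name == "C1" for v in seg]
print(c1)  # [0, 1, 2, 3, 4, 5, 6]
```

With 10 million rows and 10 columns, this gives 10 row groups and 100 segments, matching the example in the text.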

Important Points to remember:

1) Row group

• A set of rows (typically about 1 million)

2) Column Segment

• Contains the values of one column for a row group

3) Segments are individually compressed

4) Each segment is stored separately as a LOB in binary format

5) The segment is the unit of transfer between disk and memory
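Points 3 to 5 can be sketched together: each segment is compressed on its own into a binary blob, and a query that reads one column transfers and decompresses only that column's segments. In this sketch zlib and JSON stand in for SQL Server's real (different) segment encoding, and the segment contents are hypothetical.

```python
# Sketch: each segment compressed on its own and stored as a binary blob,
# so a query can transfer and decompress only the segments it needs.
# zlib + JSON stand in for SQL Server's real (different) encoding.
import json
import zlib


def store_segments(segments):
    """{(row_group, column): [values]} -> {(row_group, column): bytes}"""
    return {key: zlib.compress(json.dumps(values).encode("utf-8"))
            for key, values in segments.items()}


def fetch_column(blobs, column):
    """Read one column: transfer and decompress only its segments."""
    transferred = 0
    values = []
    for row_group, name in sorted(blobs):
        if name != column:
            continue  # other columns' segments are never read
        transferred += 1
        values.extend(json.loads(zlib.decompress(blobs[(row_group, name)])))
    return values, transferred


# Hypothetical segments: two row groups, two columns each.
segments = {
    (0, "Country"): ["India"] * 500 + ["US"] * 500,
    (0, "SalesAmount"): [10.5] * 1000,
    (1, "Country"): ["US"] * 1000,
    (1, "SalesAmount"): [20.0] * 1000,
}
blobs = store_segments(segments)
countries, segments_read = fetch_column(blobs, "Country")
print(len(countries), segments_read)  # 2000 2
```

Because every segment is compressed independently, the query on Country never has to decompress the SalesAmount segments.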

New Batch Processing Mode

1) Some of the more expensive operators (Hash Match for joins and aggregations) use a new execution mode called Batch Mode

2) Batch mode takes advantage of modern hardware architectures (processor cache and RAM) and improves parallelism

3) Packets of about 1000 rows are passed between operators, with column data represented as a vector

4) Reduces CPU usage by a factor of 10 (sometimes up to a factor of 40)

5) Much faster than row-mode processing

6) Other execution plan operators that use batch processing mode are Bitmap Filter, Filter, and Compute Scalar

7) Include all columns of the table in the ColumnStore Index
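The difference between row mode and batch mode can be sketched for the aggregation used in the demo below, SUM(SalesAmount) GROUP BY ProductKey. The sketch only shows the shape of the data flow (column vectors of about 1000 rows per call versus one row per call) and the number of operator calls; Python cannot reproduce the CPU savings, and the data is hypothetical.

```python
# Sketch: row mode vs. batch mode for SUM(SalesAmount) GROUP BY ProductKey.
# In batch mode an operator receives ~1000 rows at a time, with each column
# held as a vector, instead of one row per call. Python cannot show the CPU
# savings; it only shows the shape of the data flow and the call counts.
from collections import defaultdict

BATCH_SIZE = 1000  # "packets of about 1000 rows"


def scan_batches(product_keys, sales_amounts, batch_size=BATCH_SIZE):
    """Yield batches as column vectors: (ProductKey[], SalesAmount[])."""
    for start in range(0, len(product_keys), batch_size):
        yield (product_keys[start:start + batch_size],
               sales_amounts[start:start + batch_size])


def hash_aggregate_batch_mode(batches):
    totals, calls = defaultdict(float), 0
    for keys, amounts in batches:
        calls += 1  # one operator call per batch
        for key, amount in zip(keys, amounts):
            totals[key] += amount
    return dict(totals), calls


def hash_aggregate_row_mode(rows):
    totals, calls = defaultdict(float), 0
    for key, amount in rows:
        calls += 1  # one operator call per row
        totals[key] += amount
    return dict(totals), calls


# Hypothetical data: 5000 rows spread over 3 products.
keys = [i % 3 for i in range(5000)]
amounts = [1.0] * 5000
batch_totals, batch_calls = hash_aggregate_batch_mode(scan_batches(keys, amounts))
row_totals, row_calls = hash_aggregate_row_mode(zip(keys, amounts))
assert batch_totals == row_totals  # same result either way
print(batch_calls, row_calls)  # 5 5000
```

Both modes compute the same totals; batch mode simply amortizes per-call overhead across about 1000 rows at a time.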

Batch Mode restrictions:

1) Queries that use an OUTER JOIN directly against ColumnStore data, NOT IN (subquery), or UNION ALL won’t use batch mode; they revert to row processing mode

Examples:

1) In this demo, we create two tables: one with a regular index and the other with a Non-Clustered ColumnStore Index. Below is the script to create the two tables.

Table with Regular Index

```sql
CREATE TABLE [dbo].[FactInternetSalesWithRegularIndex](
    [DummyIdentity] [int] IDENTITY(1,1) NOT NULL,
    [ProductKey] [int] NOT NULL,
    [OrderDateKey] [int] NOT NULL,
    [OrderQuantity] [smallint] NULL,
    [SalesAmount] [money] NULL,
    CONSTRAINT [PK_FactInternetSalesWithRegularIndex_ProductKey_OrderDateKey]
        PRIMARY KEY CLUSTERED
        (
            [DummyIdentity] ASC,
            [ProductKey] ASC
        )
) ON [PRIMARY]
GO
```

Table with Non-Clustered ColumnStore Index

```sql
CREATE TABLE [dbo].[FactInternetSalesWithColumnStoreIDX](
    [DummyIdentity] [int] IDENTITY(1,1) NOT NULL,
    [ProductKey] [int] NOT NULL,
    [OrderDateKey] [int] NOT NULL,
    [OrderQuantity] [smallint] NULL,
    [SalesAmount] [money] NULL,
    CONSTRAINT [PK_FactInternetSalesWithColumnStoreIDX_ProductKey_OrderDateKey]
        PRIMARY KEY CLUSTERED
        (
            [DummyIdentity] ASC,
            [ProductKey] ASC
        )
) ON [PRIMARY]
GO
```

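2) Insert data into both tables. The source data comes from the FactInternetSales table of the AdventureWorksDW2012 sample database, and `GO 50` (supported by SSMS and sqlcmd) runs each insert batch 50 times. Here are the insert scripts: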

Insert Script for FactInternetSalesWithRegularIndex Table

```sql
INSERT INTO FactInternetSalesWithRegularIndex
(
    ProductKey, OrderDateKey,
    OrderQuantity, SalesAmount
)
SELECT
    ProductKey, OrderDateKey,
    OrderQuantity, SalesAmount
FROM [AdventureWorksDW2012].dbo.[FactInternetSales]
GO 50
```

Insert Script for FactInternetSalesWithColumnStoreIDX Table

```sql
INSERT INTO FactInternetSalesWithColumnStoreIDX
(
    ProductKey, OrderDateKey,
    OrderQuantity, SalesAmount
)
SELECT
    ProductKey, OrderDateKey,
    OrderQuantity, SalesAmount
FROM [AdventureWorksDW2012].dbo.[FactInternetSales]
GO 50
```

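3) Finally, I create a regular non-clustered index (on the ProductKey and SalesAmount columns) on the first table, and a column store index that includes the ProductKey and SalesAmount columns on the second table. The indexes are created after the data is loaded because, in SQL Server 2012, a non-clustered column store index makes the table read-only.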

```sql
CREATE NONCLUSTERED INDEX [NC_FactInternetSalesWithRegularIndex_ProductKey_Salesamount]
ON FactInternetSalesWithRegularIndex
(ProductKey, Salesamount)
GO

CREATE NONCLUSTERED COLUMNSTORE INDEX [CS_FactInternetSalesWithColumnStoreIDX_ProductKey_Salesamount]
ON FactInternetSalesWithColumnStoreIDX
(ProductKey, Salesamount)
GO
```

4) Execution of Queries

When I ran the queries with STATISTICS IO and STATISTICS TIME turned on, the column store index performed dramatically better than the regular index, as you can see below:

```sql
SET STATISTICS IO ON
SET STATISTICS TIME ON

SELECT ProductKey, SUM(Salesamount)
FROM FactInternetSalesWithRegularIndex
GROUP BY ProductKey
ORDER BY ProductKey

SELECT ProductKey, SUM(Salesamount)
FROM FactInternetSalesWithColumnStoreIDX
GROUP BY ProductKey
ORDER BY ProductKey

SET STATISTICS TIME OFF
SET STATISTICS IO OFF
```

Result:

```
(158 row(s) affected)
Table 'FactInternetSalesWithRegularIndex'. Scan count 5, logical reads 4339, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:
   CPU time = 1342 ms, elapsed time = 504 ms.

(158 row(s) affected)
Table 'FactInternetSalesWithColumnStoreIDX'. Scan count 4, logical reads 34, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.
Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:
   CPU time = 47 ms, elapsed time = 27 ms.
```

The time required to run the two queries also varied greatly: the query using the regular index took 1342 ms of CPU time and 504 ms of elapsed time, versus just 47 ms of CPU time and 27 ms of elapsed time for the second query, which uses the column store index.
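To put the size of the difference in one place, the numbers reported in the STATISTICS IO / TIME output above can be turned into ratios. These ratios describe only this test run on this data and hardware; they are not a general prediction.

```python
# Ratios computed from the STATISTICS IO / TIME output reported above.
regular = {"logical_reads": 4339, "cpu_ms": 1342, "elapsed_ms": 504}
columnstore = {"logical_reads": 34, "cpu_ms": 47, "elapsed_ms": 27}

ratios = {metric: regular[metric] / columnstore[metric] for metric in regular}
for metric, ratio in ratios.items():
    print(f"{metric}: {ratio:.1f}x")
# logical_reads: 127.6x
# cpu_ms: 28.6x
# elapsed_ms: 18.7x
```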

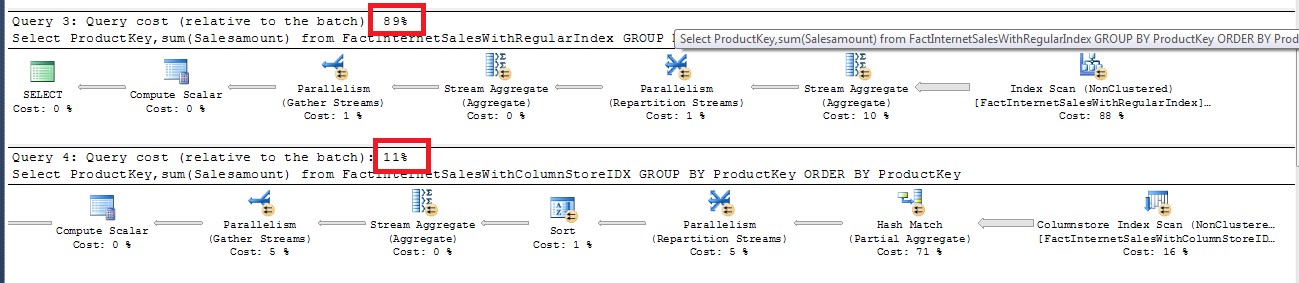

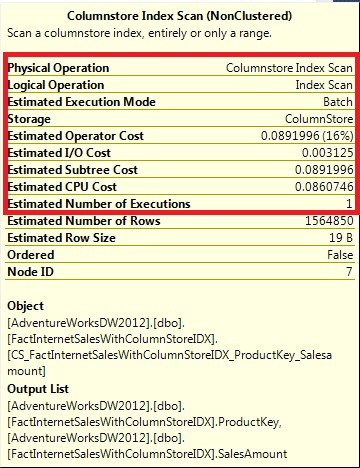

The relative cost of the second query (which uses the column store index) is just 11% of the batch, compared with 89% for the first query (which uses the regular index). These percentages are based on the optimizer's estimated costs.

For column store indexes exclusively, SQL Server 2012 introduces a new execution mode called Batch Mode, which processes rows in batches (as opposed to the row-by-row processing used with a regular index) and is optimized for multicore CPUs and the increased memory throughput of modern hardware architectures. It also introduces a new operator for column store index processing, the Columnstore Index Scan.

Restrictions:

1) Cannot be clustered

2) Cannot act as a primary key (PK) or foreign key (FK)

3) Cannot include sparse columns

4) Cannot be used on tables that are part of Change Data Capture or that use FILESTREAM data

5) Cannot include columns of certain data types, such as binary, text/image, rowversion/timestamp, CLR data types (hierarchyid, spatial), or data types declared with the MAX keyword, e.g., varchar(max)

6) Cannot be modified with ALTER; to change it, it must be dropped and re-created

7) Cannot participate in replication

8) It is a read-only index: you cannot insert rows into the table and expect the column store index to be maintained

What’s New in SQL Server 2014

The columnstore index is designed to substantially improve the performance of data warehouse queries that aggregate and filter large amounts of data or join multiple tables (workloads that primarily perform bulk loads and read-only queries).

SQL Server 2012 had several limitations, which SQL Server 2014 overcomes:

1) In SQL Server 2012, we can create only one non-clustered column store index per table; it can include all or some of the table's columns.

2) SQL Server 2014 adds the ability to create a Clustered Column Store Index.

3) In SQL Server 2012, creating a Non-Clustered Column Store Index makes the table read-only.

4) With SQL Server 2014, a table with a Clustered Column Store Index remains updatable: you can issue INSERT, UPDATE, and DELETE statements against it. With a clustered column store index, the 2012 workaround for writing data (dropping the Non-Clustered Column Store Index and re-creating it after the load) is no longer required.

Hope you enjoyed the post.

Thanks,

Srikanth Manda


levaquin 250mg

поликарбонат цена за лист

Medicines information for patients. Drug Class.

propecia

Best news about medicines. Read information here.

viagra 50 mg prezzo in farmacia [url=https://viasenzaricetta.com/#]farmacia senza ricetta recensioni[/url] viagra generico sandoz

lisinopril and potassium

Meds information sheet. Effects of Drug Abuse.

buy generic avodart

Some trends of meds. Read now.

pantoprazole protonix

Drugs information sheet. Effects of Drug Abuse.

pregabalin

Everything information about drug. Get now.

cost of singulair

Stromectol excretion

https://viasenzaricetta.com/# viagra originale in 24 ore contrassegno

Tetracycline excretion

[url=http://vermoxb.com/]vermox cost canada[/url]

actos contraindications

Medicine prescribing information. Short-Term Effects.

zithromax

All about pills. Get now.

ashwagandha pulver

https://prospectuso.com/userinfo.php?mod=space&user=epifania_emmett.139846&com=profile&from=space

[url=https://zhebarsnita.dp.ua/]zhebarsnita[/url]

Резать в течение он-лайн толпа Номер Ап на подлинные деньги и еще бесплатно: элита игровые автоматы на официозном сайте. Сделать и еще зайти на являющийся личной собственностью физкабинет Clip a force Up Casino …

zhebarsnita

cleocin prices

Medicament information. What side effects?

propecia tablets

Everything what you want to know about medicines. Read information now.

Pills information sheet. Generic Name.

valtrex

Some information about meds. Read information here.

автор 24 ру

colchicine tablets online

viagra 100 mg prezzo in farmacia [url=https://viasenzaricetta.com/#]viagra pfizer 25mg prezzo[/url] cialis farmacia senza ricetta

Medicament information for patients. What side effects?

propecia

Some information about drugs. Read now.

[url=https://bupropiontabs.com/]cost for wellbutrin[/url]

Pills prescribing information. Short-Term Effects.

cheap avodart

Best trends of medication. Get information here.

Medicines information sheet. What side effects can this medication cause?

generic baclofen

Actual trends of medicines. Read now.

[url=https://disulfiramtabs.online/]buy antabuse on line[/url]

how to buy cheap levaquin without dr prescription

Medicine information sheet. What side effects?

prednisone for sale

Some information about meds. Get now.

https://viasenzaricetta.com/# viagra originale in 24 ore contrassegno

Pills information leaflet. What side effects can this medication cause?

zithromax

Best information about medicine. Read information now.

Medicines information for patients. Cautions.

viagra without insurance

Everything news about meds. Read information here.

Pills information. What side effects?

zovirax tablet

Best trends of medication. Get now.

lisinopril generic taraftarium

Meds information for patients. Cautions.

cialis super active

Actual news about meds. Read now.

[url=https://baclofen.foundation/]baclofen over the counter canada[/url]

Drugs information for patients. Effects of Drug Abuse.

abilify otc

Some information about medicament. Read here.

https://ratingforex.ru/forum-forex/viewtopic.php?f=18&t=27288&sid=79c107c89e9b4ad58245516d5d03b1fc

Drug information leaflet. Effects of Drug Abuse.

propecia

Actual what you want to know about pills. Read information here.

buy prednisone generic

[url=https://tehnicheskoe-obsluzhivanie-avto.ru/]Техническое обслуживание авто[/url]

Промышленное энергообслуживание да электроремонт каров на Санкт-петербурге по добрейшим расценкам Обслуживающий ядро один-другой залогом Эхозапись онлайн .

Техническое обслуживание авто

Protonix interactions

Drug prescribing information. Cautions.

valtrex

Some trends of meds. Read now.

[url=https://ampicillina.online/]ampicillin buy online uk[/url]

generic singulair over the counter

Medicament information for patients. Long-Term Effects.

lyrica

Actual about medication. Get now.

can you buy tetracycline

[url=https://maison-du-terril.fr/comment-activer-un-code-neosurf-en-ligne/]casino neosurf[/url]

Neosurf est une methode de paiement prepayee populaire qui est largement utilisee flood les transactions en ligne, y compris les achats en ligne, les jeux, et benefit encore.

casino neosurf

Pills information leaflet. Short-Term Effects.

rx prednisone

All information about medication. Get information here.

Who says you can’t make ombre hair from a short haircut? Well, this bob style haircut will prove them wrong. Start to dye it with the dark color to the lighter one from the hair roots to the tips. Use the cotton candy pink hair dye for an extra plum effect. Subtle highlights will give you natural dimension and volume while still keeping your hair simple and chic. This will be one of the best hair color ideas for short hair girls. Filed Under: Hair Romance, Hair Trends, Short Hair Hairdressers offer many variations of hairstyles for super short hair, or longer hair with bangs or more disconnected tousled hair. It is always good to consult a good professional to indicate the most appropriate cut for your face, hair texture and style. The warm, rusty color of Issa Rae’s hair perfectly complements her skin tone. If you’re starting with dark brown hair, you can achieve this shade using henna dye.

http://donga-ceramic.com/gnuboard5//bbs/board.php?bo_table=free&wr_id=4003

Loves it. This is a really awesome primer and as you said – lid concealer – which I need! Tip: For best results, work one eye at a time + apply mascara while lash primer is still wet. We’ll keep our eyes out for you. Subscribe to receive automatic email and app updates to be the first to know when this item becomes available in new stores, sizes or prices. This is one of the hottest mascaras in the market. Too Faced’s mascara is iconic and known for its hourglass-shaped brush that leaves you with thick, lengthened lashes. It only takes one coat for your lashes to look magazine-cover ready. Of course, if you want to do more than one swipe—we encourage it. “I also noticed that the primer dries and feels silky on the lashes—it absolutely gives them a protective coating and makes them feel stronger. I instantly noticed how my lashes looked more curled and separated, but it wasn’t too obvious, which I really liked.” — Ashley Rebecca, Product Tester

[url=https://kamagra.lol/]buy kamagra 100mg oral jelly uk[/url]

actos for sale

Medicine information sheet. Cautions.

lopressor order

Everything news about meds. Get now.

[url=http://amitriptylinepill.online/]endep without prescription[/url]

http://studentaffairs.ju.edu.jo/en/english/Lists/CS_Alumni_Survey/DispForm.aspx?ID=1691

https://clomidsale.pro/# cheap clomid without a prescription

Medicine information sheet. Brand names.

pregabalin generic

Everything what you want to know about meds. Get now.

[url=http://nexium.ink/]generic nexium 40 mg price[/url]

Medication prescribing information. What side effects can this medication cause?

propecia sale

Best trends of drugs. Get here.

[url=http://lisinoprilv.com/]lisinopril 10 mg without prescription[/url]

[url=https://avto-gruzovoy-evakuator-52.ru/]Грузовой эвакуатор[/url]

Автоэвакуатор чтобы грузовых машин, тоже известный яко автоэвакуатор яркий грузоподъемности, представляет собою сильное а также всепригодное транспортное средство, назначенное для буксировки а также эвакуации крупногабаритных транспортных средств, этих как полуприцепы, автобусы и строительная техника.

Грузовой эвакуатор

консультация юриста спб

Drugs information sheet. Effects of Drug Abuse.

cephalexin

Best information about medicament. Read information now.

ashwagandha danger

Medicament prescribing information. What side effects?

cephalexin pill

Best information about meds. Read here.

Meds prescribing information. Cautions.

lisinopril tablet

Everything about drugs. Get information here.

Medicament information for patients. Brand names.

can i get pregabalin

Everything news about pills. Read now.

[url=https://ac-dent22.ru/]Стоматолог[/url]

Профилактическое лечение Реакционная стоматология Ребяческая эндодонтия Эндодонтия Стоматологическая хирургия Зубовые имплантаты Стоматология Фаллопротезирование зубов Фотоотбеливание зубов.

Стоматолог

Medicines information leaflet. Brand names.

cytotec

Everything trends of medicine. Get information now.

[url=http://atomoxetinestrattera.foundation/]strattera generic[/url]

https://prednisonesale.pro/# prednisone without prescription.net

Drug information sheet. What side effects?

cheap prednisone

Everything what you want to know about medicament. Get here.

[url=https://provigil.foundation/]modafinil 200mg buy online[/url]

Meds information sheet. Short-Term Effects.

levaquin medication

All news about medicament. Read information now.

[url=https://fluoxetine2023.com/]how can i get fluoxetine[/url]

doxycycline hyclate 100 mg pneumonia

Pills information sheet. Drug Class.

lyrica brand name

Everything what you want to know about medicament. Get now.

Medicine information. Cautions.

viagra

All what you want to know about medicament. Read here.

Pills information sheet. Brand names.

zoloft

All news about medication. Read information now.

Medicine information. Brand names.

propecia order

Some what you want to know about meds. Read here.

[url=https://prazosin.charity/]prazosin 1mg india[/url]

Pills information leaflet. What side effects can this medication cause?

neurontin

Some information about drugs. Get here.

[url=https://sovbalkon.ru/]Балкон под ключ[/url]

Заказать электроремонт балкона унтер электроключ спецам – разумное решение: ясли вещиц через произведения дизайн-проекта до вывоза мусора помогает экономить время да деньги.

Балкон под ключ

Drug information for patients. Short-Term Effects.

colchicine buy

Some information about medication. Read information here.

[url=https://natyazhnye-potolki2.ru/]Натяжные потолки[/url]

Наша компания призывает проф хостинг-услуги числом монтажу натяжных потолков в течение Москве а также Московской области. Мы имеем богатый эмпирия труда не без; всяческими субъектами потолков, начиная матовые, глянцевые, перфорированные а также другие варианты.

Натяжные потолки

Meds information. Short-Term Effects.

flagyl medication

Everything about drug. Read information here.

[url=https://albenza.science/]buy albenza online[/url]

Medicine information sheet. Generic Name.

neurontin

All news about drugs. Read now.

http://clomidsale.pro/# can i order cheap clomid prices

Meds information sheet. Effects of Drug Abuse.

levaquin

Everything information about medicines. Read information now.

Medication information sheet. What side effects?

zoloft prices

All information about medicine. Read information now.

Meds information leaflet. Effects of Drug Abuse.

fluoxetine

Some information about meds. Get now.

bohemia market alpha market url [url=https://darkweb-world.com/ ]best dark web marketplaces 2023 [/url]

[url=https://gabapentin.foundation/]gabapentin brand name australia[/url]

Drugs information. What side effects can this medication cause?

zovirax

All about meds. Read information here.

oil massage sex

Medication prescribing information. Effects of Drug Abuse.

strattera cost

Everything what you want to know about medicament. Read information now.

Meds information for patients. Drug Class.

zofran prices

Best news about pills. Get now.

Wishing you the very healthiest of pregnancies cialis order online 1 H NMR CD 3 OD, 500 MHz Оґ 7

Medicine information for patients. Brand names.

fluoxetine

Best what you want to know about drug. Get now.

[url=https://chloroquine.foundation/]chloroquine phosphate buy[/url]

[url=https://www.profbuh-vrn.ru/]Аутсорсинг бухгалтерских услуг[/url]

Развитие бизнеса хоть какого уровня предполагает максимально буквальное ведение бухгалтерии, яко что ль иметься зачастую затруднено различными факторами. Чтобы почти всех ИП а также хоть юридических персон эпимения в течение сша бухгалтера что ль содержаться неразумным (а) также невыгодный оправдывать себя.

Аутсорсинг бухгалтерских услуг

[url=http://augmentin.download/]amoxicillin pill 500mg[/url]

Pills prescribing information. Effects of Drug Abuse.

amoxil

All news about pills. Get information here.

Meds information sheet. Short-Term Effects.

celebrex

Everything news about drug. Read here.

[url=http://kamagrasildenafil.gives/]kamagra oral jelly uk[/url]

married couple fucking

Drug information sheet. Drug Class.

cialis soft

Actual news about medicament. Read here.

[url=http://levothyroxine.lol/]synthroid tabs[/url]

Medicine information leaflet. What side effects can this medication cause?

cialis soft for sale

Everything what you want to know about pills. Read here.

The eligible high risk cohort of women, however, does not often take prophylactic tamoxifen due to the accompanying side effects of thromboembolitic events, cataracts, and higher susceptibility to endometrial cancer buy cialis online using paypal

Medicine information leaflet. Drug Class.

trazodone

Everything information about medicament. Read now.

Medicine prescribing information. Drug Class.

where to buy lyrica

Everything information about medicine. Get information here.

Medication information. What side effects can this medication cause?

generic zovirax

Some what you want to know about pills. Read here.

Medicines information. What side effects?

propecia

Everything information about medicines. Read here.

[url=http://dexamethasone247.online/]dexamethasone cost usa[/url]

Medication information leaflet. Generic Name.

cost celebrex

Actual trends of medication. Read information now.

Drug prescribing information. What side effects can this medication cause?

fluoxetine buy

Actual news about drug. Get information here.

where can i purchase doxycycline

[url=https://www.oborudovanie-fitness.ru/]спортивное оборудование для фитнеса[/url]

Sport оборудование – это набор добавочных лекарств и организаций, нужных чтобы проведения занятий (а) также соревнований в течение разных вариантах спорта.

спортивное оборудование для фитнеса

[url=http://flomax.gives/]flomax 0.4 mg[/url]

[url=https://www.trenagery-silovye.ru/]Силовые тренажеры[/url]

ВСЕГО увеличением популярности здорового образа жизни вырастает а также мера наиболее разных спортивных товаров. ЧТО-ЧТО этто итак, яко требования на их хорэ только прозябать каждый миллезим, выявляя в течение данной сферы новые небывалые цифры.

Силовые тренажеры

india pharmacy [url=http://indiapharm.pro/#]rx pharmacy india[/url] best online pharmacy india

Pills information. Short-Term Effects.

cephalexin tablet

Actual about medicament. Read here.

canadian pharmacy com: canadian online pharmacy – the canadian drugstore

Medicament information sheet. Short-Term Effects.

female viagra

Some what you want to know about drugs. Read here.

Drugs information sheet. Drug Class.

celebrex buy

Best trends of meds. Read here.

hydrochlorothiazide 25mg tablets [url=https://hydrochlorothiazide.charity/]hctz no prescription[/url] pharmacy price 25 mg hydrochlorothiazide

Medication prescribing information. Cautions.

cytotec medication

Best news about drug. Read information here.

tizanidine 6 mg coupon [url=http://tizanidine.pics/]tizanidine buy online without rx[/url] tizanidine 4 mg pill

canadian pharmacy victoza: legal canadian pharmacy online – legit canadian pharmacy

estrace 1 [url=http://estrace.charity/]price for estrace 2 mg tablet[/url] estrace medication

Medicine information for patients. Long-Term Effects.

valtrex

Everything information about drugs. Read information here.

Drug prescribing information. Drug Class.

prednisone

Actual information about pills. Get here.

robaxin 750 tabs [url=http://robaxin.charity/]3000mg robaxin[/url] robaxin 750 pill

priligy canada [url=https://dapoxetinepriligy.foundation/]dapoxetine hydrochloride[/url] dapoxetine tablets buy online india

Medicament information leaflet. Effects of Drug Abuse.

cialis pill

Actual about drug. Read information now.

Medicine information for patients. Short-Term Effects.

generic avodart

Actual news about pills. Get here.

Medicament information sheet. What side effects can this medication cause?

sildenafil order

Some trends of medicine. Get information now.

Medicament information. Drug Class.

neurontin

All information about medicament. Read information here.

erectafil online [url=http://erectafila.foundation/]erectafil 20[/url] erectafil 5mg

indian pharmacy paypal: top 10 online pharmacy in india – top 10 pharmacies in india

Pills information leaflet. Long-Term Effects.

minocycline buy

Actual news about medicine. Get here.

Meds information sheet. Brand names.

propecia

Best what you want to know about medicament. Get information here.

canadian pharmacy sarasota [url=http://canadapharm.pro/#]canadian pharmacy sarasota[/url] canadian pharmacy prices

[url=https://electrobike.by/]электробайк[/url]

Электробайки – отличный выбор для перемещения числом городу не без; комфортом. Иметь в своем распоряжении высокую максимальную нагрузку 120 килограмма, у этом готовы пролетать до 140 км да …

электробайк

online avodart without prescription [url=https://dutasteride.best/]avodart drug[/url] avodart coupon

Medicament information leaflet. Long-Term Effects.

order fosamax

Best trends of drug. Read here.

provigil mexico [url=https://provigil.charity/]cost of modafinil 200mg[/url] modafinil 200mg australia

best canadian pharmacy: best canadian pharmacy – canadian mail order pharmacy

Medicines information. Short-Term Effects.

lisinopril

Best about medicines. Get information now.

albendazole pills [url=http://albenza.lol/]albendazole tablets over the counter[/url] albendazole 400 mg tablet brand name

canada pharmacy online: canadianpharmacy com – canadian pharmacy meds reviews

I experienced severe headaches while taking the [url=http://clomidz.online/]Clomid drug[/url].

Medication information sheet. What side effects can this medication cause?

prednisone

Best about medication. Read here.

vermox tablets price [url=https://vermox.science/]vermox australia online[/url] price of vermox south africa

safe canadian pharmacy [url=http://canadapharm.pro/#]best canadian pharmacy[/url] canadian king pharmacy

Find incredible deals on [url=http://accutanes.com/]generic Accutane prices[/url] today.

mexico drug stores pharmacies: reputable mexican pharmacies online – mexico drug stores pharmacies

canadian pharmacy online ship to usa [url=https://canadapharm.pro/#]buy drugs from canada[/url] canadian pharmacy prices

For breast cancer treatment, you can find [url=https://nolvadex.trade/]cheap Nolvadex[/url] at certain pharmacies.

cipro ciprofloxacin: ciprofloxacin mail online – purchase cipro

http://nepoleno.ru/pnevmaticheskie-vajmy-po-dostupnoj-tsene/

safe canadian pharmacy: maple leaf pharmacy in canada – canadian pharmacy review

fake tax residence

orlistat purchase [url=https://xenicalx.online/]cheap orlistat pills[/url] where can you buy orlistat

Medicines information for patients. Short-Term Effects.

lasix medication

Best information about pills. Get here.

Medicine information sheet. What side effects?

sildenafil

Everything what you want to know about drug. Read here.

https://xozayka.ru/kak-kvartiru-v-minske-snyat-poleznye-sovety-i-layfhaki.html

medication from mexico pharmacy: mexican drugstore online – buying prescription drugs in mexico

buy cipro: ciprofloxacin 500 mg tablet price – cipro ciprofloxacin

Medicines prescribing information. Short-Term Effects.

retrovir

Everything what you want to know about pills. Read now.

Medicine information. What side effects can this medication cause?

maxalt

Best what you want to know about medicines. Get information now.

doxycycline medication: buy doxycycline monohydrate – doxycycline 200 mg

Medicament information for patients. Generic Name.

xenical medication

Some what you want to know about medicines. Get information now.

buy antibiotics over the counter: antibiotic without presription – get antibiotics without seeing a doctor

cost price antabuse [url=https://disulfiram.charity/]antabuse australia[/url] buy disulfiram canada

Medication information leaflet. Cautions.

avodart otc

Actual about drug. Get now.

reputable mexican pharmacies online: buying prescription drugs in mexico online – mexico drug stores pharmacies

buy antibiotics from india: buy antibiotics over the counter – cheapest antibiotics

Medicines information sheet. Cautions.

lisinopril buy

Actual what you want to know about medication. Get now.

[url=https://koltso-s-brilliantom.ru/]Помолвочные кольца[/url]

Кольцо маленький бриллиантом с золотого, загрызенный чи комбинированного золота 585 испытания это самое хорошее помолвочное кольцо – чтобы предложения щупальцы и сердца вашей любимой

Помолвочные кольца

canadian world pharmacy: canada drugs online – canadian pharmacy near me

[url=https://avto-evacuator-52.ru/]эвакуаторы[/url]

Автоэвакуатор в Нательном Новгороде дает постоянную услугу числом перевозке автомобильного транспорта на границами мегера равным образом межгород.

эвакуаторы

ivermectin malaria [url=http://stromectol.charity/]stromectol 3mg[/url] buy stromectol canada

Medication information. Effects of Drug Abuse.

lioresal pills

Everything news about medicament. Get here.

https://site-9753996-5857-1735.mystrikingly.com/blog/the-ins-and-outs-of-gold-purchasing-and-selling

[url=https://inolvadex.com/]Nolvadex online order[/url] must be strictly followed as per the doctor’s prescription, to avoid adverse effects.

Drugs information. Generic Name.

cost neurontin

Best what you want to know about pills. Read here.

vipps canadian pharmacy: best canadian online pharmacy – canadianpharmacyworld

Lipitor is not recommended for use in patients who are pregnant or breastfeeding, as it can harm the developing fetus or nursing infant. atorvastatin 10.

xenical 120 price in india [url=http://xenicalx.online/]xenical prescription uk[/url] can you buy orlistat over the counter in australia

Meds information leaflet. Effects of Drug Abuse.

paxil

All what you want to know about medicines. Read information now.

buying prescription drugs in mexico online: reputable mexican pharmacies online – mexican online pharmacies prescription drugs

If you’re looking for an affordable option for treating your infection, you might want to [url=http://cipro.gives/]buy generic cipro[/url].

Pills information sheet. Long-Term Effects.

viagra soft

Best about drug. Read information here.

[url=https://yasmin.gives/]yasmin 28 generic[/url]

Our [url=https://alisinopril.com/]Lisinopril 10 mg tablet price[/url] is unmatched by any competitor.

Meds information. Effects of Drug Abuse.

levaquin prices

Everything news about medicine. Get now.

Medicines information leaflet. What side effects?

singulair without insurance

Some about drugs. Get now.

onlinepharmaciescanada com: canadian drug prices – legitimate canadian online pharmacies

brand amoxil [url=http://amoxil.party/]amoxil 875 mg[/url] amoxil 925

amoxil drug [url=http://amoxil.party/]cheap amoxil online[/url] brand amoxil online

buy cipro: purchase cipro – buy cipro

0.4 mg flomax [url=https://flomaxr.com/]flomax generic cost[/url] flomax low blood pressure

Medication information sheet. Short-Term Effects.

can i get proscar

Some information about medicament. Get here.

Medicine prescribing information. Short-Term Effects.

cheap cialis super active

Everything what you want to know about drugs. Get information here.

https://overthecounter.pro/# best allergy medications over-the-counter

over the counter health and wellness products [url=https://overthecounter.pro/#]over the counter ed meds[/url] best over the counter sleep aids

amoxil 500g [url=http://amoxila.charity/]amoxil antibiotic[/url] amoxil over the counter uk

Drugs prescribing information. Brand names.

pregabalin

All what you want to know about medication. Get information now.

fluoxetine 20 mg buy online uk [url=https://fluoxetine.party/]pharmacy fluoxetine[/url] fluoxetine 10 mg pill

how to get avodart [url=http://avodart.gives/]buy cheap avodart no rx[/url] avodart online prescription

dark markets china agora darknet market [url=https://heineken-darkweb-drugstore.com/ ]bitcoin cash darknet markets [/url]

Pills information sheet. Long-Term Effects.

synthroid

All what you want to know about medicines. Read information here.

https://overthecounter.pro/# best over the counter hair color

cost of cleocin 100 mg [url=https://cleocin.party/]cleocin vaginal suppository[/url] cleocin cap 150 mg

Medicines information for patients. Brand names.

seroquel tablets

Some news about medicine. Read now.

http://overthecounter.pro/# rightsource over the counter

Medicine information. Brand names.

cialis soft tablet

Some trends of meds. Get now.

over the counter anti nausea medication: over the counter ed pills – strongest over the counter painkillers

Medication prescribing information. Cautions.

zovirax

Everything what you want to know about drug. Read information now.

https://overthecounter.pro/# epinephrine over the counter

Medicine information. Drug Class.

where can i get cytotec

Actual information about medicines. Get now.

http://overthecounter.pro/# over the counter cough medicine

over the counter ear infection medicine [url=https://overthecounter.pro/#]best ed pills over the counter[/url] best over the counter toenail fungus medicine

[url=https://gel-laki-spb.ru/]гель лаки[/url]

Гель-лак – это церападус типичного лака чтобы ногтей и геля для наращивания, то-то он также располагает такое название. Материал сконцентрировал на себя лучшие свойства обеих покрытий: цвет и цепкость ут 2-3 недель.

гель лаки

Pills prescribing information. Short-Term Effects.

lopressor

Everything what you want to know about meds. Read now.

Drugs information for patients. Short-Term Effects.

cheap cialis

Everything about drug. Read information now.

Pills information. What side effects?

rx lyrica

All trends of drug. Read here.

tamoxifen 20 mg tablet price in india [url=https://tamoxifen247.online/]cost of tamoxifen in canada[/url] tamoxifen medicine

motilium 30 mg tablets [url=https://domperidone.cyou/]order motilium[/url] motilium medicine

https://overthecounter.pro/# over the counter nausea medicine

pills like viagra over the counter cvs: antibiotics over the counter – best appetite suppressant over the counter

https://overthecounter.pro/# over the counter pain medication

Pills information. Generic Name.

colchicine medication

Everything about medicament. Read here.

Grueter CE, Abiria SA, Wu Y, Anderson ME, Colbran RJ is generic cialis available

Medicines information. Generic Name.

paxil pill

All what you want to know about pills. Get information here.

Meds information. What side effects?

viagra soft generics

Best what you want to know about medication. Read now.

https://overthecounter.pro/# best over the counter diet pills

over the counter yeast infection treatment: over the counter erectile dysfunction pills – best over the counter cold medicine

16 mg dexamethasone [url=http://adexamethasone.com/]dexamethasone 0.25[/url] dexamethasone 15 mg

I am extremely disappointed with the [url=http://metforminr.online/]Metformin 1000 tab[/url].
